Next Generation Assistant

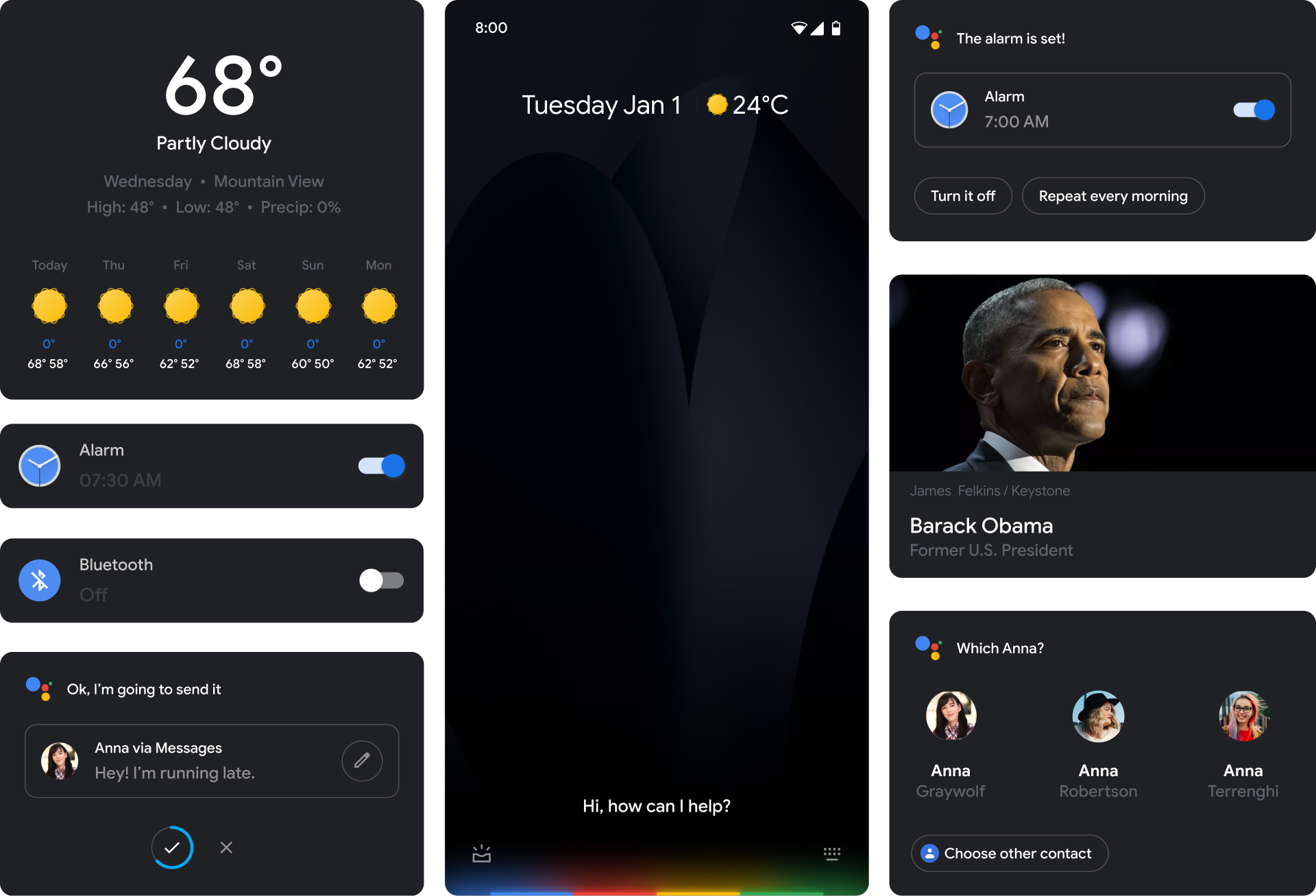

Led the design of the revamped mobile Assistant that runs exclusively on Google’s flagship phone, the Pixel 4.

Background

NGA (Next Generation Assistant) takes advantage of on-device AI and dedicated Tensor Processing Units (TPUs) to enable real-time natural language understanding and speech recognition.

To better understand which new use cases live understanding could unlock, I started building prototypes from simple primitives.

These exercises were insightful: they pointed to a shift in the way we design interfaces, from direct manipulation to semantic manipulation.

It’s not about telling the AI which button to press; it’s about telling it what you want.

Evolving brand

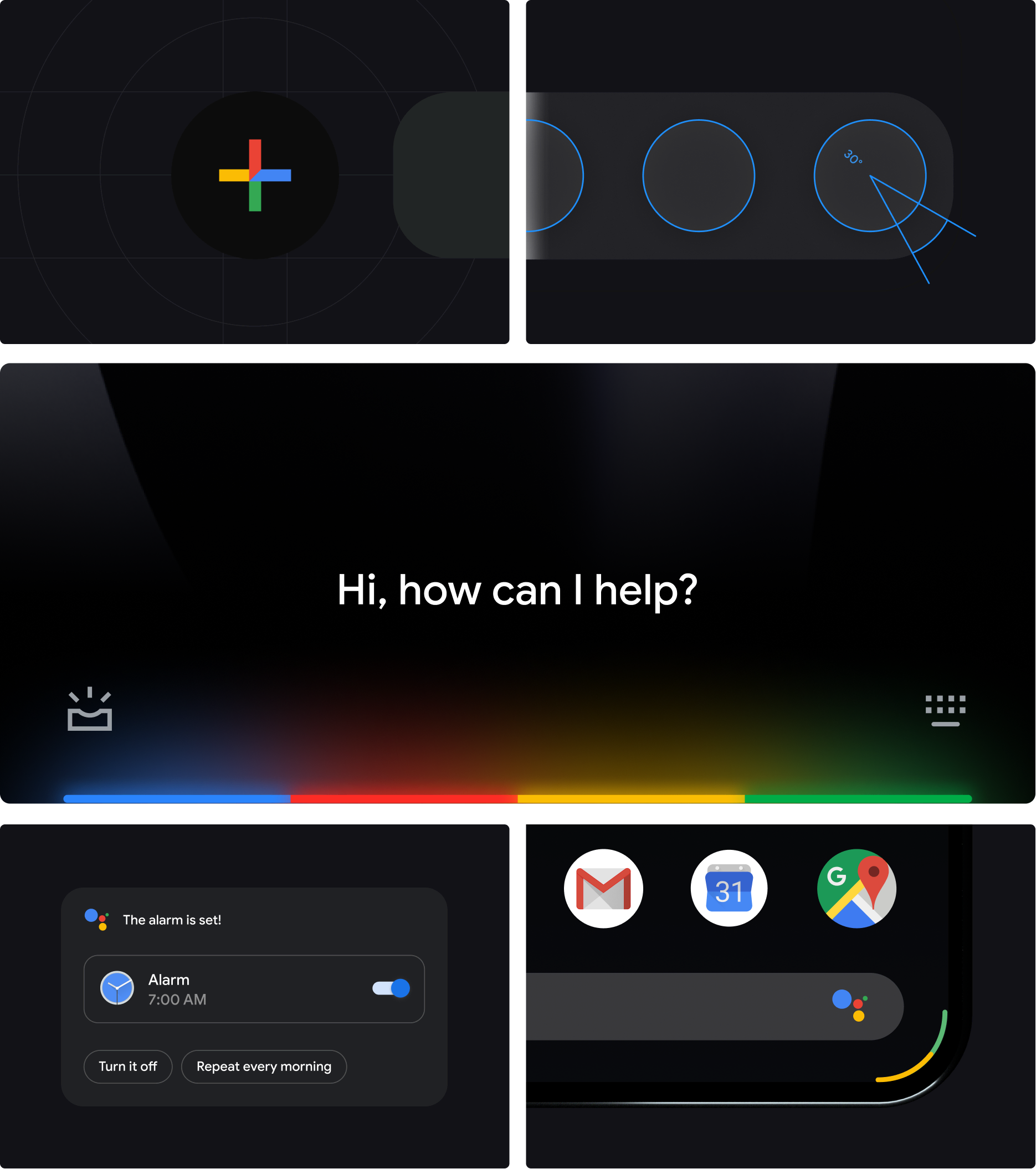

The Google Logo Family, with its voice-enabled system, served as the foundation for many Assistant products across a wide range of devices.

We revisited and evolved the system to pave the way for a more continuous and ephemeral style of interaction. The aspirational goal was for Assistant to feel like an extension of the hardware rather than dots floating on top of your apps.


GM Blue 500

- RGB — 66 133 244

- CMYK — 88 40 0 0

- HEX — #4285F4


GM Red 500

- RGB — 234 67 53

- CMYK — 0 87 89 0

- HEX — #EA4335


GM Yellow 500

- RGB — 251 188 4

- CMYK — 0 37 100 0

- HEX — #FBBC04


GM Green 500

- RGB — 52 168 83

- CMYK — 85 0 92 0

- HEX — #34A853
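The RGB and HEX values above encode the same 8-bit channels: HEX simply writes each of red, green, and blue as two base-16 digits. The following is a minimal Python sketch (the names `PALETTE`, `rgb_to_hex`, and `hex_to_rgb` are hypothetical, introduced only for illustration) that derives each HEX code from the listed RGB triple and decodes it back.

```python
# RGB triples as listed in the palette above (8 bits per channel).
PALETTE = {
    "GM Blue 500": (66, 133, 244),
    "GM Red 500": (234, 67, 53),
    "GM Yellow 500": (251, 188, 4),
    "GM Green 500": (52, 168, 83),
}


def rgb_to_hex(rgb: tuple[int, int, int]) -> str:
    """Encode an 8-bit RGB triple as an uppercase #RRGGBB string."""
    r, g, b = rgb
    return f"#{r:02X}{g:02X}{b:02X}"


def hex_to_rgb(code: str) -> tuple[int, int, int]:
    """Decode a #RRGGBB string back into an 8-bit RGB triple."""
    code = code.lstrip("#")
    return tuple(int(code[i:i + 2], 16) for i in (0, 2, 4))


for name, rgb in PALETTE.items():
    print(f"{name}: RGB {rgb} -> {rgb_to_hex(rgb)}")
```

Because the conversion is exact in both directions, comparing a derived code against a published HEX value is a quick way to catch a typo in either column of a color spec.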

The result was what we called edge glow: a natural blend of Google’s rigid structure (the color segments) and the aspirational metaphor of light and ephemerality (the glow).

We insisted that the UI match the corner radius of the device, a simple detail that turned out to be more challenging than we had anticipated.
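To illustrate why following the corner radius takes care, here is a hypothetical Python sketch, not the shipped implementation. It models the screen outline as a rounded rectangle, walks it by arc length so a glow stroke bends along the curved corners instead of the straight bounding box, and splits the path into four equal segments named after the palette colors. The equal split, the color order, the starting point at the top-left, and all function names are assumptions made for illustration.

```python
import math

# Hypothetical sketch: w, h, and r are illustrative parameters, not device
# specifications. Coordinates are screen-style (y grows downward).

COLORS = ["GM Blue 500", "GM Red 500", "GM Yellow 500", "GM Green 500"]


def perimeter(w: float, h: float, r: float) -> float:
    """Outline length of a w x h rounded rectangle: four straight edges plus four quarter arcs."""
    if not 0 < r <= min(w, h) / 2:
        raise ValueError("corner radius must lie in (0, min(w, h) / 2]")
    return 2 * (w - 2 * r) + 2 * (h - 2 * r) + 2 * math.pi * r


def _arc(cx: float, cy: float, start: float, t: float, r: float) -> tuple[float, float]:
    """Point t units along a corner arc of radius r centred at (cx, cy), from angle `start`."""
    a = start + t / r
    return (cx + r * math.cos(a), cy + r * math.sin(a))


def point_at(s: float, w: float, h: float, r: float) -> tuple[float, float]:
    """Point at arc length s along the outline, starting at (r, 0) and moving clockwise."""
    s %= perimeter(w, h, r)
    quarter = math.pi * r / 2
    # Each piece pairs its length with a function mapping a local offset t to a point.
    pieces = [
        (w - 2 * r, lambda t: (r + t, 0.0)),                      # top edge
        (quarter, lambda t: _arc(w - r, r, -math.pi / 2, t, r)),  # top-right corner
        (h - 2 * r, lambda t: (w, r + t)),                        # right edge
        (quarter, lambda t: _arc(w - r, h - r, 0.0, t, r)),       # bottom-right corner
        (w - 2 * r, lambda t: (w - r - t, h)),                    # bottom edge
        (quarter, lambda t: _arc(r, h - r, math.pi / 2, t, r)),   # bottom-left corner
        (h - 2 * r, lambda t: (0.0, h - r - t)),                  # left edge
        (quarter, lambda t: _arc(r, r, math.pi, t, r)),           # top-left corner
    ]
    for length, place in pieces:
        if s <= length:
            return place(s)
        s -= length
    # Floating-point round-off can leave s a hair past the end; close the loop.
    return pieces[-1][1](pieces[-1][0])


def glow_segments(w: float, h: float, r: float, samples: int = 8):
    """Split the outline into equal-length coloured segments, each sampled as a polyline."""
    step = perimeter(w, h, r) / len(COLORS)
    segments = []
    for i, name in enumerate(COLORS):
        start = i * step
        points = [point_at(start + step * k / samples, w, h, r) for k in range(samples + 1)]
        segments.append((name, points))
    return segments
```

For example, `glow_segments(100, 200, 10)` returns four nine-point polylines whose samples near the corners sit on the 10-unit arcs. Tracing the straight bounding box instead would push the glow past the physical screen edge at every corner, which is one reason a mismatched radius is so visible.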

Answer system

NGA allows Assistant to be invoked on top of other apps. To avoid obscuring valuable screen real estate with unnecessarily large answer cards, we redesigned some of the key answer sets so that they no longer take over the entire screen.

Looking back, it was insightful to see how the early conceptual work on continued conversation materialized in the product.

NGA is a first step toward bringing Assistant closer to the operating system. I look forward to seeing how the system and the expression of AI evolve.

You can watch the Pixel 4 trailer here.

Special thanks to Jonathan Lee, Andy Gugel, James O’Leary, Remington McElhaney, and the NGA core team.